Facebook silently enables facial recognition abilities for users outside EU and Canada

Facebook is now informing users around the world that it’s rolling out facial recognition features. In December, we reported the features would be coming to the platform; that rollout finally appears to have begun. Users in the European Union and Canada will not be notified, because laws restrict this type of activity in those areas.

The new tools will let you find photos that you’re in but haven’t been tagged in, help you protect yourself against strangers using your photo, and allow Facebook to tell people with visual impairments who’s in their photos and videos. Facebook warns that the feature is enabled by default but can be switched off at any time; additionally, the firm says it may add new capabilities at any time.

While Facebook may want its users to “feel confident” uploading pictures online, the feature will likely give many users the heebie-jeebies when they think of the colossal database of faces that Facebook holds and what it could do with all that data. Even non-users should be cautious about which photos they appear in if they don’t want to be caught up in Facebook’s web of data.

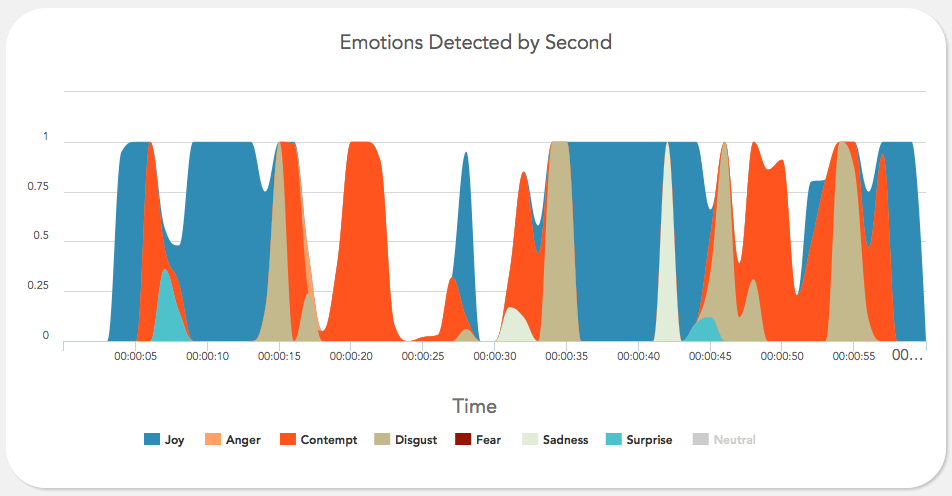

“Right now, in a handful of computing labs scattered across the world, new software is being developed which has the potential to completely change our relationship with technology. Affective computing is about creating technology which recognizes and responds to your emotions. Using webcams, microphones or biometric sensors, the software uses a person’s physical reactions to analyze their emotional state, generating data which can then be used to monitor, mimic or manipulate that person’s emotions.”
