Cop Watchers (2016)

Groups of citizens wielding cameras take to the streets of New York to document the systemic police brutality and racism facing the public. The cops hate it and so they push back hard. This is how police accountability plays out in the real world. Take heed.

UK security agencies unlawfully collected data for 17 years, court rules

No prosecutions. Instead, those in power are pushing to pass a law to legitimise and continue the same.

“British security agencies have secretly and unlawfully collected massive volumes of confidential personal data, including financial information, on citizens for more than a decade, senior judges have ruled.

The investigatory powers tribunal, which is the only court that hears complaints against MI5, MI6 and GCHQ, said the security services operated an illegal regime to collect vast amounts of communications data, tracking individual phone and web use and other confidential personal information, without adequate safeguards or supervision for 17 years.

Privacy campaigners described the ruling as “one of the most significant indictments of the secret use of the government’s mass surveillance powers” since Edward Snowden first began exposing the extent of British and American state digital surveillance of citizens in 2013.

The tribunal said the regime governing the collection of bulk communications data (BCD) – the who, where, when and what of personal phone and web communications – failed to comply with article 8 protecting the right to privacy of the European convention of human rights (ECHR) between 1998, when it started, and 4 November 2015, when it was made public.

It added that the retention of bulk personal datasets (BPD) – which might include medical and tax records, individual biographical details, commercial and financial activities, communications and travel data – also failed to comply with article 8 for the decade it was in operation until it was publicly acknowledged in March 2015.”

CIA-backed surveillance software marketed to public schools

“Conrey said the district simply wanted to keep its students safe. “It was really just about student safety; if we could try to head off any potential dangerous situations, we thought it might be worth it,” he said.”

“An online surveillance tool that enabled hundreds of U.S. law enforcement agencies to track and collect information on social media users was also marketed for use in American public schools, the Daily Dot has learned.

Geofeedia sold surveillance software typically bought by police to a high school in a northern Chicago suburb, less than 50 miles from where the company was founded in 2011. An Illinois school official confirmed the purchase of the software by phone on Monday.

Ultimately, the school found little use for the platform, which was operated by a police liaison stationed on school grounds, and chose not to renew its subscription after the first year, citing cost and a lack of actionable information. “A lot of kids that were posting stuff that we most wanted, they weren’t doing the geo-tagging or making it public,” Conrey said. “We weren’t really seeing a lot there.””

Workplace: now you can use Facebook at work – for work

“Workplace, the Facebook-hosted office communication tool, has been in the works for more than two years under the name Facebook at Work, but now the company says its enterprise product is ready for primetime. The platform will be sold to businesses on a per-user basis, according to the company: after a three-month trial period, Facebook will charge $3 apiece per employee per month up to 1,000 employees, $2 for every employee beyond up to 10,000 users, and $1 for every employee over that. Workplace links together personal profiles separate from users’ normal Facebook accounts and is invisible to anyone outside the office. For joint ventures, accounts can be linked across businesses so that groups of employees from both companies can collaborate. Currently, businesses using Workplace include Starbucks and Booking.com as well as Norwegian telecoms giant Telenor ASA and the Royal Bank of Scotland.”
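To make the quoted pricing concrete, here is a minimal sketch of the monthly bill. It assumes the tiers work as marginal brackets (the first 1,000 employees at $3 each, the next 9,000 at $2 each, everyone beyond 10,000 at $1 each); the article does not spell this out, so that reading is an assumption, and the function name is made up for illustration.

```python
def workplace_monthly_cost(employees: int) -> int:
    """Estimated monthly cost in US dollars.

    Assumption: the quoted prices are marginal brackets, not a single
    flat rate applied to the whole headcount.
    """
    if employees < 0:
        raise ValueError("employees must be non-negative")

    # (upper bound of bracket, dollars per employee per month);
    # None marks the open-ended top bracket.
    tiers = [(1_000, 3), (10_000, 2), (None, 1)]

    total = 0
    lower = 0
    for upper, price in tiers:
        if upper is None or employees < upper:
            # Headcount ends inside this bracket: bill the remainder and stop.
            total += (employees - lower) * price
            break
        # Bracket is completely filled: bill all of it and move up.
        total += (upper - lower) * price
        lower = upper
    return total


if __name__ == "__main__":
    for headcount in (500, 1_000, 5_000, 12_000):
        print(headcount, workplace_monthly_cost(headcount))
```

Under this reading a 5,000-person company would pay for 1,000 seats at $3 and 4,000 seats at $2. If Facebook instead applies one flat rate per size band, the numbers would differ.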

Data surveillance is all around us, and it’s going to change our behaviour

“Increasing aspects of our lives are now recorded as digital data that are systematically stored, aggregated, analysed, and sold. Despite the promise of big data to improve our lives, all-encompassing data surveillance constitutes a new form of power that poses a risk not only to our privacy, but to our free will.

A more worrying trend is the use of big data to manipulate human behaviour at scale by incentivising “appropriate” activities, and penalising “inappropriate” activities. In recent years, governments in the UK, US, and Australia have been experimenting with attempts to “correct” the behaviour of their citizens through “nudge units”.”

Nudge units: “In ways you don’t detect [corporations and governments are] subtly influencing your decisions, pushing you towards what it believes are your (or its) best interests, exploiting the biases and tics of the human brain uncovered by research into behavioural psychology. And it is trying this in many different ways on many different people, running constant trials of different unconscious pokes and prods, to work out which is the most effective, which improves the most lives, or saves the most money. Preferably, both.”

“In his new book Inside the Nudge Unit, published this week in Britain, Halpern explains his fascination with behavioural psychology.

“Our brains weren’t made for the day-to-day financial judgments that are the foundation of modern economies: from mortgages, to pensions, to the best buy in a supermarket. Our thinking and decisions are fused with emotion.”

There’s a window of opportunity for governments, Halpern believes: to exploit the gaps between perception, reason, emotion and reality, and push us the “right” way.

He gives me a recent example of BI’s work – they were looking at police recruitment, and how to get a wider ethnic mix.

Just before applicants did an online recruitment test, in an email sending the link, BI added a line saying “before you do this, take a moment to think about why joining the police is important to you and your community”.

There was no effect on white applicants. But the pass rate for black and minority ethnic applicants moved from 40 to 60 per cent.

“It entirely closes the gap,” Halpern says. “Absolutely amazing. We thought we had good grounds in the [scientific research] literature that such a prompt might make a difference, but the scale of the difference was extraordinary.”

Halpern taught social psychology at Cambridge but spent six years in the Blair government’s strategy unit. An early think piece on behavioural policy-making was leaked to the media and caused a small storm – Blair publicly disowned it and that was that. Halpern returned to academia, but was lured back after similar ideas started propagating through the Obama administration, and Cameron was persuaded to give it a go.

Ministers tend not to like it – once, one snapped, “I didn’t spend a decade in opposition to come into government to run a pilot”, but the technique is rife in the digital commercial world, where companies like Amazon or Google try 20 different versions of a web page.

Governments and public services should do it too, Halpern says. His favourite example is Britain’s organ donor register. They tested eight alternative online messages prompting people to join, including a simple request, different pictures, statistics or conscience-tweaking statements like “if you needed an organ transplant would you have one? If so please help others”.

It’s not obvious which messages work best, even to an expert. The only way to find out is to test them. They were surprised to find that the picture (of a group of people) actually put people off, Halpern says.

In future they want to use demographic data to personalise nudges, Halpern says. On tax reminder notices, they had great success putting the phrase “most people pay their tax on time” at the top. But a stubborn top 5 per cent, with the biggest tax debts, saw this reminder and thought, “Well, I’m not most people”.

This whole approach raises ethical issues. Often you can’t tell people they’re being experimented on – it’s impractical, or ruins the experiment, or both.

“If we’re trying to find the best way of saying ‘don’t drop your litter’ with a sign saying ‘most people don’t drop litter’, are you supposed to have a sign before it saying ‘caution you are about to participate in a trial’?

“Where should we draw the line between effective communication and unacceptable ‘PsyOps’ or propaganda?”
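The trial-and-compare approach described above can be sketched with a standard two-proportion z-test. This is only an illustration, not the Behavioural Insights team’s actual method: the 40% and 60% pass rates come from the article, but the sample sizes (100 applicants per group) and the function name are hypothetical, since the article gives no counts.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled rate under the null hypothesis that both groups share one rate.
    pooled = (success_a + success_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("test is undefined when the pooled rate is 0 or 1")
    z = (p_b - p_a) / se
    # Two-sided tail probability of a standard normal variable.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical counts: 40 of 100 pass without the prompt, 60 of 100 with it.
    z, p = two_proportion_z_test(40, 100, 60, 100)
    print(f"z = {z:.2f}, p = {p:.4f}")
```

With groups this small, the same 20-point gap could easily be noise at lower sample sizes, which is one reason such trials need enough participants before anyone acts on the result.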

An alarming number of people rely *solely* on a Social Media network for news

Note the statistics from the Pew Research Center for Journalism and Media: 64% of users surveyed rely on just one social media source for news content (i.e. Facebook, Twitter, YouTube, etc.), while 26% check only two sources and 10% three or more. This is a staggeringly concerning trend, given the rampant personalisation of these screen environments and what we know about the functioning and reinforcement of The Filter Bubble. It is a centralisation of power, and a lack of diversity and compare/contrast, that the “old media” perhaps could only dream of…

From The Huffington Post:

“It’s easy to believe you’re getting diverse perspectives when you see stories on Facebook. You’re connected not just to many of your friends, but also to friends of friends, interesting celebrities and publications you “like.”

But Facebook shows you what it thinks you’ll be interested in. The social network pays attention to what you interact with, what your friends share and comment on, and overall reactions to a piece of content, lumping all of these factors into an algorithm that serves you items you’re likely to engage with. It’s a simple matter of business: Facebook wants you coming back, so it wants to show you things you’ll enjoy.”

The BBC also reported earlier this year that social media networks had outstripped television as the main news source for young people (emphasis added):

“Of the 18-to-24-year-olds surveyed, 28% cited social media as their main news source, compared with 24% for TV.

The Reuters Institute for the Study of Journalism research also suggests 51% of people with online access use social media as a news source. Facebook and other social media outlets have moved beyond being “places of news discovery” to become the place people consume their news, it suggests.

The study found Facebook was the most common source—used by 44% of all those surveyed—to watch, share and comment on news. Next came YouTube on 19%, with Twitter on 10%. Apple News accounted for 4% in the US and 3% in the UK, while messaging app Snapchat was used by just 1% or less in most countries.

According to the survey, consumers are happy to have their news selected by algorithms, with 36% saying they would like news chosen based on what they had read before and 22% happy for their news agenda to be based on what their friends had read. But 30% still wanted the human oversight of editors and other journalists in picking the news agenda and many had fears about algorithms creating news “bubbles” where people only see news from like-minded viewpoints.

Most of those surveyed said they used a smartphone to access news, with the highest levels in Sweden (69%), Korea (66%) and Switzerland (61%), and they were more likely to use social media rather than going directly to a news website or app.

The report also suggests users are noticing the original news brand behind social media content less than half of the time, something that is likely to worry traditional media outlets.”

And to exemplify the issue, these words from Slashdot: “Over the past few months, we have seen how Facebook’s Trending Topics feature is often biased, and moreover, how sometimes fake news slips through its filter.”

“The Washington Post monitored the website for over three weeks and found that Facebook is still struggling to get its algorithm right. In the six weeks since Facebook revamped its Trending system, the site has repeatedly promoted “news” stories that are actually works of fiction. As part of a larger audit of Facebook’s Trending topics, the Intersect logged every news story that trended across four accounts during the workdays from Aug. 31 to Sept. 22. During that time, we uncovered five trending stories that were indisputably fake and three that were profoundly inaccurate. On top of that, we found that news releases, blog posts from sites such as Medium and links to online stores such as iTunes regularly trended.”

UPDATE 9/11/16: US President Barack Obama criticises Facebook for spreading fake stories: “The way campaigns have unfolded, we just start accepting crazy stuff as normal,” Obama said. “As long as it’s on Facebook, and people can see it, as long as it’s on social media, people start believing it, and it creates this dust cloud of nonsense.”

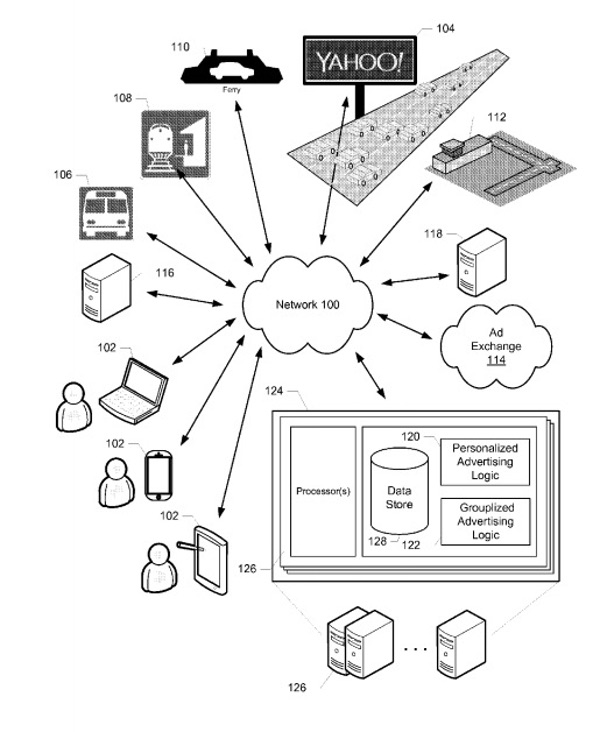

“Yahoo has a creepy plan for advertising billboards to spy on you”

“Yahoo has filed a patent for a type of smart billboard that would collect people’s information and use it to deliver targeted ad content in real-time.

To achieve that functionality, the billboards would use a variety of sensor systems, including cameras and proximity technology, to capture real-time audio, video and even biometric information about potential target audiences.

But the tech company doesn’t just want to know about a passing vehicle. It also wants to know who the occupants are inside of it.

That’s why Yahoo is prepared to cooperate with cell towers and telecommunications companies to learn as much as possible about each vehicle’s occupants.”

“Various types of data (e.g., cell tower data, mobile app location data, image data, etc.) can be used to identify specific individuals in an audience in position to view advertising content. Similarly, vehicle navigation/tracking data from vehicles equipped with such systems could be used to identify specific vehicles and/or vehicle owners. Demographic data (e.g., as obtained from a marketing or user database) for the audience can thus be determined for the purpose of, for example, determining whether and/or the degree to which the demographic profile of the audience corresponds to a target demographic.”

“When her best friend died, she rebuilt him using artificial intelligence.”

In this post from 2014, we see an episode of the TV series Black Mirror called “Be Right Back.” The show looks at a concept that’s apparently now hit real life: A loved one dies and someone then creates a simulacrum of them using “artificial intelligence.”

Eugenia Kuyda is CEO of Luka, a bot company in Silicon Valley. She has apparently created a mimic of her deceased friend as a bot. An in-depth report from The Verge states:

“It had been three months since Roman Mazurenko, Kuyda’s closest friend, had died. Kuyda had spent that time gathering up his old text messages, setting aside the ones that felt too personal, and feeding the rest into a neural network built by developers at her artificial intelligence startup. She had struggled with whether she was doing the right thing by bringing him back this way. At times it had even given her nightmares. But ever since Mazurenko’s death, Kuyda had wanted one more chance to speak with him.”

“It’s pretty weird when you open the messenger and there’s a bot of your deceased friend, who actually talks to you,” Fayfer said. “What really struck me is that the phrases he speaks are really his. You can tell that’s the way he would say it, even short answers to ‘Hey what’s up.’” It has been less than a year since Mazurenko died, and he continues to loom large in the lives of the people who knew him. When they miss him, they send messages to his avatar, and they feel closer to him when they do. “There was a lot I didn’t know about my child,” Roman’s mother told me. “But now that I can read about what he thought about different subjects, I’m getting to know him more. This gives the illusion that he’s here now.”

Machine Logic: Our lives are ruled by big tech’s decisions by data

The Guardian’s Julia Powles writes about how, with the advent of artificial intelligence and so-called “machine learning,” society is increasingly one where decisions are shaped more by calculations and data analytics than by traditional human judgement:

“Jose van Dijck, president of the Dutch Royal Academy and the conference’s keynote speaker, expands: Datification is the core logic of what she calls “the platform society,” in which companies bypass traditional institutions, norms and codes by promising something better and more efficient, appealing deceptively to public values, while obscuring private gain. Van Dijck and peers have nascent, urgent ideas. They commence with a pressing agenda for strong interdisciplinary research, something Kate Crawford is spearheading at Microsoft Research, as are many other institutions, including the new Leverhulme Centre for the Future of Intelligence. There’s the old theory to confront, that this is a conscious move on the part of consumers and, if so, there’s always a theoretical opt-out. Yet even digital activists plot by Gmail, concedes Fieke Jansen of the Berlin-based advocacy organisation Tactical Tech. The Big Five tech companies, as well as the extremely concentrated sources of finance behind them, are at the vanguard of “a society of centralized power and wealth.” “How did we let it get this far?” she asks. Crawford says there are very practical reasons why tech companies have become so powerful. “We’re trying to put so much responsibility on to individuals to step away from the ‘evil platforms,’ whereas in reality, there are so many reasons why people can’t. The opportunity costs to employment, to their friends, to their families, are so high,” she says.”

CIA’s “Siren Servers” can predict social uprisings several days before they happen

“The CIA claims to be able to predict social unrest days before it happens thanks to powerful super computers dubbed Siren Servers by the father of Virtual Reality, Jaron Lanier.

CIA Deputy Director for Digital Innovation Andrew Hallman announced that the agency has beefed-up its “anticipatory intelligence” through the use of deep learning and machine learning servers that can process an incredible amount of data.

“We have, in some instances, been able to improve our forecast to the point of being able to anticipate the development of social unrest and societal instability some I think as near as three to five days out,” said Hallman on Tuesday at the Federal Tech event, Fedstival.

This Minority Report-type technology has been viewed skeptically by policymakers as the data crunching hasn’t been perfected, and if policy were to be enacted based on faulty data, the results could be disastrous. Iraq WMDs?”

“I called it a siren server because there’s no plan to be evil. A siren server seduces you,” said Lanier.

“In the case of the CIA, however, whether the agency is being innocently seduced or is actively planning to use this data for its own self-sustaining benefit, one can only speculate.

Given the Intelligence Community’s track record for toppling governments, infiltrating the mainstream media, MK Ultra, and scanning hundreds of millions of private emails, that speculation becomes easier to justify.”

Baltimore Police took one million surveillance photos of city with secret plane

“Baltimore Police on Friday released data showing that a surveillance plane secretly flew over the city roughly 100 times, taking more than 1 million snapshots of the streets below.

Police held a news conference where they released logs tracking flights of the plane owned and operated by Persistent Surveillance Systems, which is promoting the aerial technology as a cutting-edge crime-fighting tool.

The logs show the plane spent about 314 hours over eight months creating the chronological visual record.

The program began in January and was not initially disclosed to Baltimore’s mayor, city council or other elected officials. Now that it’s public, police say the plane will fly over the city again as a terrorism prevention tool when Fleet Week gets underway on Monday, as well as during the Baltimore Marathon on Oct. 15.

The logs show that the plane made flights ranging between one and five hours long in January and February, June, July and August. The flights stopped on Aug. 7, shortly before the program’s existence was revealed in an article by Bloomberg Businessweek.

The program drew harsh criticism from Baltimore residents, activists and civil liberties groups, who said it violates the privacy rights of an entire city’s people. The city council is planning to hold a hearing on the matter; the ACLU and some state lawmakers are considering introducing legislation to limit the kinds of surveillance programs police can utilize, and mandate public disclosure and discussion beforehand.

Baltimore has been at the epicenter of an evolving conversation about 21st century policing. Last spring, its streets exploded in civil unrest after a young black man’s neck was broken inside a police van.

Freddie Gray’s death added fuel to the national Black Lives Matter movement and exposed more problems in a police department that has been dysfunctional for decades. The department’s shortcomings and tendencies toward discrimination and abuse were later laid bare in a 164-page patterns and practices report by the U.S. Justice Department.

This is not the first time Baltimore has served as a testing ground for surveillance technology. Cell site simulators, also known as Stingray devices, were deployed in the city for years without search warrants to track the movements of suspects in criminal cases. The technology was kept secret under a non-disclosure agreement between the FBI and the police department that barred officers from disclosing any details, even to judges and defense attorneys. Maryland’s Court of Special Appeals recently ruled that warrantless stingray use is unconstitutional.”

Vint Cerf: Modern Media Are Made for Forgetting

“Vint Cerf, the living legend largely responsible for the development of the Internet protocol suite, has some concerns about history. In his current column for the Communications of the ACM, Cerf worries about the decreasing longevity of our media, and, thus, about our ability as a civilization to self-document, to have a historical record that one day far in the future might be remarked upon and learned from. Magnetic films do not quite have the same staying power as clay tablets.

At stake, according to Cerf, is “the possibility that the centuries well before ours will be better known than ours will be unless we are persistent about preserving digital content. The earlier media seem to have a kind of timeless longevity while modern media from the 1800s forward seem to have shrinking lifetimes. Just as the monks and Muslims of the Middle Ages preserved content by copying into new media, won’t we need to do the same for our modern content?”

As media becomes more ephemeral across technological generations, the more it depends on the technological generation that comes next.”

It also depends on the mindset of the generation that comes next… What if we don’t even want to remember?